Artificial intelligence (“AI”) has become the latest buzzword for stamping ‘technological advancement’ on just about anything. But as we all know, the devil is in the details and when it comes to AI, the details really matter. In its most basic form, AI enables computers to perform tasks traditionally considered distinctive to humans – such as decision-making and problem solving – and in doing so, may appear to enable deeper understanding. AI-based applications come in various forms, some enhancing everyday technology, others emerging as new technology. Yet, as one digs deeper, questions arise as to how intelligence with ‘artificial’ in its name competes with the human ingenuity that created it.

AI-Enabled Technology

An interesting example of how AI advances an existing technology is in its application to facial recognition. Automated facial recognition (“AFR”) was pioneered in the early 1960s based on comparing a set of 20 facial distances – such as separation of the pupils and width of the mouth – to a facial database. At the time, these distances were measured manually by human operators, until the 1970s when the process was automated for wider application, albeit with limited success.
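The distance-based matching described above can be sketched in a few lines. This is only an illustration, not a reconstruction of the historical systems: the function name `match_face`, the three-feature vectors (rather than 20) and all measurements are made up, and a simple nearest-neighbor search with Euclidean distance is assumed as the comparison rule.

```python
import math

def match_face(probe, database):
    """Return (name, distance) of the database entry closest to the probe."""
    best_name, best_dist = None, math.inf
    for name, features in database.items():
        # Euclidean distance between two vectors of facial measurements
        dist = math.dist(probe, features)
        if dist < best_dist:
            best_name, best_dist = name, dist
    return best_name, best_dist

# Hypothetical measurements in millimetres (e.g., pupil separation, mouth width, ...)
database = {
    "person_a": [62.0, 50.0, 35.0],
    "person_b": [58.0, 47.0, 31.0],
    "person_c": [66.0, 54.0, 38.0],
}
probe = [58.5, 47.2, 31.4]

name, dist = match_face(probe, database)
print(name, round(dist, 2))
```

With so few features and so few faces, two different people can easily sit close together in this measurement space, which is one way to see why a small, manually measured database limited early results.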

A turning point came in the 1990s when the Departments of Motor Vehicles (“DMVs”) in West Virginia and New Mexico began using facial recognition to prevent the issuance of multiple licenses to a person applying with a fictitious name. Their success led to DMV offices across the U.S. creating one of the first major markets for AFR technology. The key to success was in the data. Earlier applications had failed because they lacked a large facial database (the ‘real world’) from which to ‘learn’ artificially. In other words, without training on real data, computers couldn’t dependably recognize one face from another based on a small set of distance metrics. DMV databases were sufficiently robust to ‘teach’ AFR systems to find matches accurately and efficiently, and to naturally self-calibrate over time as more faces were pulled into the database.

Arguably, these decades-old AFR systems were a very early form of AI, with computers learning a human task too laborious for humans to tackle manually on their own. Fast forward to the 2020s, where AI has matured into a field of its own and its application has accelerated.

Figure 1 – Examples of AI-based facial recognition applied to detect human emotion. Source: Zhou and Xiao (2018): 3-D Face Recognition: A Survey. Human Centric Computing and Information Science, 8 (35).

Today’s AI-enabled AFR systems have advanced from recognizing a human face to interpreting human emotion, also called ‘emotion recognition’. Some examples are shown in Figure 1. These AI models use the same facial metrics to predict emotional state with reasonable accuracy. This became practical only recently – not because AI models did not exist earlier, but because the number of possible face-emotion combinations increases exponentially, demanding far more data and computation than was previously available. With more data, faster computers, and the development of AI-specialized chips and computer architectures, today’s AI models can harness the computational power they need to solve these more complex problems.

The evolution of AFR systems illustrates the rapid growth potential of AI and how it can repurpose and/or reinvent legacy technologies. According to a study by the Deloitte AI Institute (2021), the market for facial recognition has grown from a $3.8B to an $8.5B industry in the last five years. Yet, the market for the more complex problem of emotion recognition is expected to grow to over $37B by 2026. After all, if computers can be trained to identify you and your state of mind in real-time, AI begins to feel much more human. In the retail space, you could remotely measure customer satisfaction. In the security space, you could discreetly detect nervous or suspicious behaviors. In the health and wellness space, you could safely monitor the well-being of hospital patients. The possibilities feel endless.

AI Learning Versus Human Learning

Human intelligence and the learnings gained by AI models are inherently different, the latter being truly artificial. Humans learn in a self-directed way, whereas AI models must be built and trained by humans to perform a specific task. In much the same way a dog can be trained to sit down and then receive a treat, an AI model can be trained on real-world data without knowing or understanding the real world. Just as that dog has no concept of why sitting earns her a treat, an AI model doesn’t know or care why it’s been trained to achieve an outcome, nor what its place is in the real world. It just acts automatically and, in a sense, ‘instinctively’ on its training.

Generative AI or “GenAI” models are distinct in their ability to create ‘new data’ – such as a synthetic face that does not exist in the real world but has all the qualities and characteristics of a real human face. GenAI models and the pseudo-reality they create begin to feel almost super-human, yet even generative models cannot do the job well without vast and robust databases upon which to train and improve.

This imparts two important limitations on AI’s ability to advance on existing technology:

First, AI models are only as good as the data on which they’re trained, or as they say, “garbage in = garbage out”. The more training data and the higher its quality, the better AI will perform its task. There is a danger of gaining false confidence in an AI model that happily makes a prediction and acts on it, even if the prediction is not dependable because the model was trained on sparse or low-quality data. When facial recognition was introduced in the 1960s, it failed to gain traction because large facial databases did not exist at the time. So-called ‘deep learning’ – and its ability to leverage efficiencies of the human brain via neural networks – is only possible when trained on vast volumes of data.

A second limitation is embedded in the nature of AI itself, as the learning process is truly artificial. AI models are blind to any physical understanding of what they’re doing and how it applies in the real world. Again, returning to the example of emotion recognition, consider the emotion of shock or surprise (see Figure 1[i]). The biological sciences have unlocked the facial physics behind this emotion – namely the gasp causing our mouths to open is a fast deep breathing technique that evolved in primitive humans to provide an extra burst of oxygen to help self-manage shocking events. A computer programmed with the human biology of facial movement could reproduce a human gasp. A model capable of that sounds quite daunting. But, if AI models are exposed to enough faces showing a full range of human emotion, they can detect, analyze, recognize and even predict shock or surprise without any physical knowledge of why it happens or how it fits in the real world.

These limitations remind us that, like most new technologies, AI models come with both strengths and potential pitfalls. So, as AI-based tools emerge in our daily lives, we must remain diligent about how they’re being used and how accurately they mimic the real world. That said, just like the human mind, AI applications will naturally improve and evolve as they are exposed to more and better real-world data.

Modeling the Weather with AI

AI-based systems develop from an ‘algorithm’ that in some ways learns like a human – from experience. Through AI-enabled apps, our cell phones feed us more and more relevant information as they ‘learn’ our patterns and preferences. But more complex fields inclined to use AI – such as detecting human emotion – require much more data. The power of AI is that complexity itself is not a roadblock, as one can simply put more computers and faster chips to work on these more complex systems.

An example is in AI-based weather modeling. Like AFR systems, computerized weather forecasting – also known as numerical weather prediction or “NWP” – has been around since the 1960s, informing a vast array of economic, commercial and social domains. NWP models are the basis for modern-day weather forecasts and projections around climate change.

In the 1990s, NWP ‘ensembles’ were introduced, expanding a single forecast to a set of forecast scenarios. For example, while an early NWP model might have predicted tomorrow’s high temperature of 75 degrees, an ensemble might offer a set of 100 or more potential future outcomes, thereby enhancing the prediction of 75 with an ensemble range of 72 to 78 degrees.

The ensemble adds value by telling us how confident we can be in the forecast itself (i.e., a range of 62 to 88 is much less confident), thereby adding ‘intelligence’ to the forecasting system.
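A minimal sketch of this idea, using made-up temperature values: both ensembles below are centered on the same 75-degree forecast, but the width of their range tells a very different story about confidence. The helper name `summarize` and the eight-member ensembles are assumptions for illustration; operational ensembles contain many more members.

```python
import statistics

def summarize(ensemble):
    """Return the central forecast and the range spanned by the ensemble members."""
    low, high = min(ensemble), max(ensemble)
    return {
        "mean": statistics.mean(ensemble),
        "low": low,
        "high": high,
        "range": high - low,  # a wider range implies a less confident forecast
    }

# Hypothetical high-temperature forecasts (degrees) from two small ensembles
confident = [72, 73, 74, 75, 75, 76, 77, 78]
uncertain = [62, 68, 72, 75, 75, 78, 82, 88]

for label, members in (("confident", confident), ("uncertain", uncertain)):
    print(label, summarize(members))
```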

But NWP ensembles are expensive – they extend a single forecast to an ensemble set by brute force, such that an ensemble of 100 forecasts will take almost 100x longer, or require about 100x more computing power to produce in the same amount of time. Yet, the benefit is well worth the computational expense, as weather modeling experts would argue that the predictive power ensemble modeling provides is compelling. That said, NWP ensembles do not harness the power of AI, as they are not trained on historical weather data and some can run with or without real-world observations.

As with face and emotion recognition, AI-based models can be trained to make forecasts that rival NWP models. This is being done at some of the most advanced firms in the world. Google DeepMind has developed an AI-based weather forecast model called GraphCast which can make 10-day weather forecasts that approach or beat the precision and accuracy of the most advanced NWP models in the world.

As with many tools built at Google, the GraphCast model is open-source, making its algorithms freely available for use in research. In fact, the European Centre for Medium-Range Weather Forecasts (“ECMWF”) currently runs an experimental version of GraphCast to monitor and evaluate the skill of AI-derived global weather predictions.

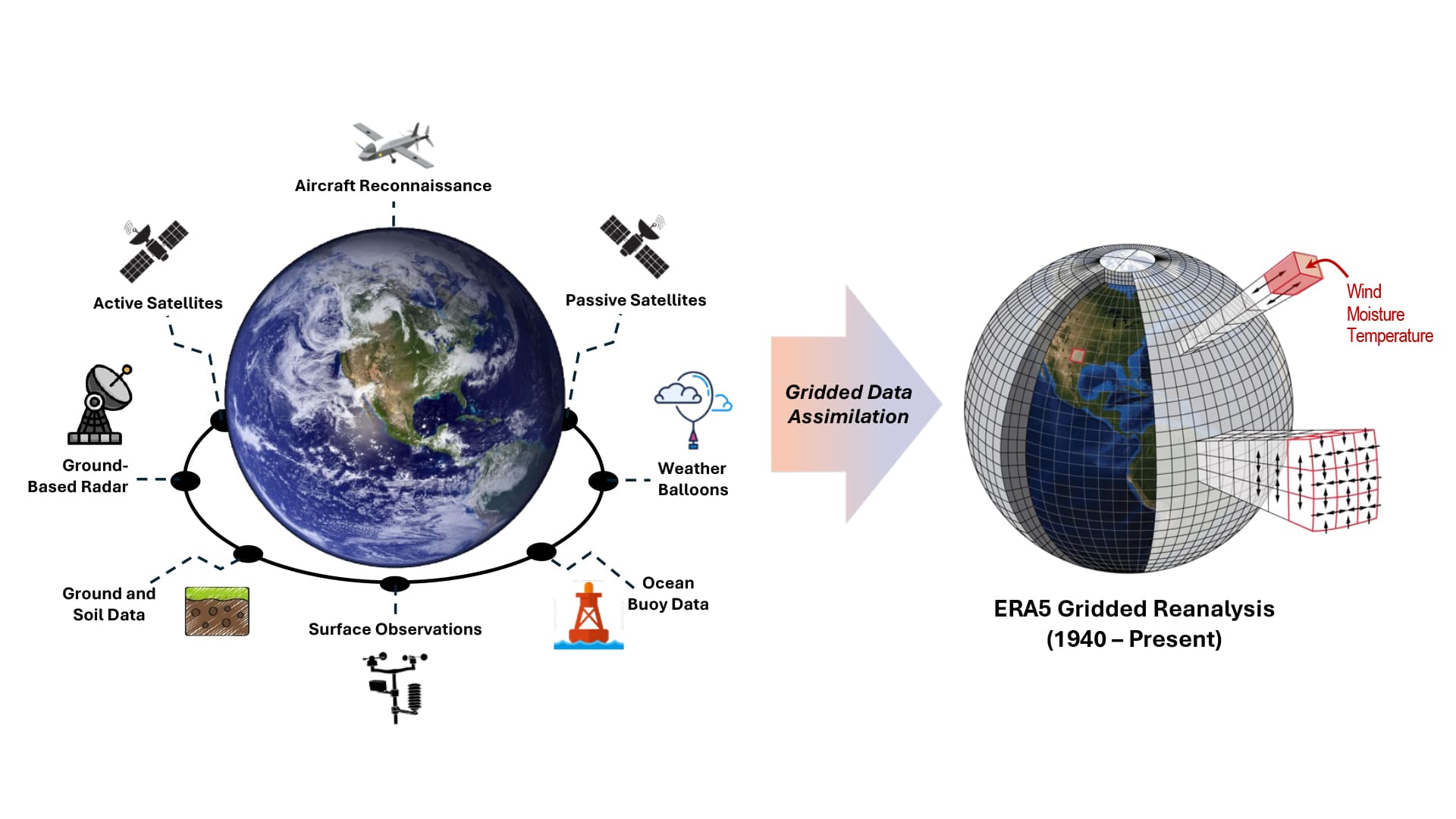

Figure 2 – The ECMWF Reanalysis Version 5 (ERA5) uses NWP modeling combined with gridded data assimilation systems to generate a 3-D gridded representation of the atmosphere. High quality data is available starting in 1940 such that the 3-D grid can be generated on a six-hourly timestep. The NWP model enforces physical relationships such that adjoining grid cells are physically consistent with how the atmosphere evolves over time. The final result is a database containing millions of grid cells at over 100,000 historical times. The ERA5 database is sufficiently large and robust to provide training to an AI weather model. (Adapted from NOAA image.)

Unlike NWP models, GraphCast lacks physical equations that describe how the atmosphere operates in the real world. Instead, GraphCast forecasts future weather by learning how historical weather patterns have evolved over time, using the ECMWF Reanalysis Database Version 5 (“ERA5”) as a training dataset.

ERA5 contains weather data covering the Earth on 20×20-mile grids stacked vertically on 137 levels from the surface to 50 miles above surface. Data for each of these roughly 35 million grid cells is available every six hours from 1940 to present. This database containing 85 years of data for millions of grid cells is sufficiently large to provide high-quality training for the AI-based model.
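As a quick check on the scale of the record, the number of six-hourly snapshots can be counted directly. The start and end dates below are assumptions chosen to match the 85 years quoted above; the actual span of any given training set may differ.

```python
from datetime import datetime, timedelta

# Assumed record boundaries: 85 calendar years starting in 1940
start = datetime(1940, 1, 1)
end = datetime(2025, 1, 1)

# Number of six-hour intervals in the record (leap years are handled by datetime)
snapshots = (end - start) // timedelta(hours=6)
print(snapshots)
```

The count comes out at roughly 124,000, which is consistent with the "over 100,000 historical times" noted in the Figure 2 caption.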

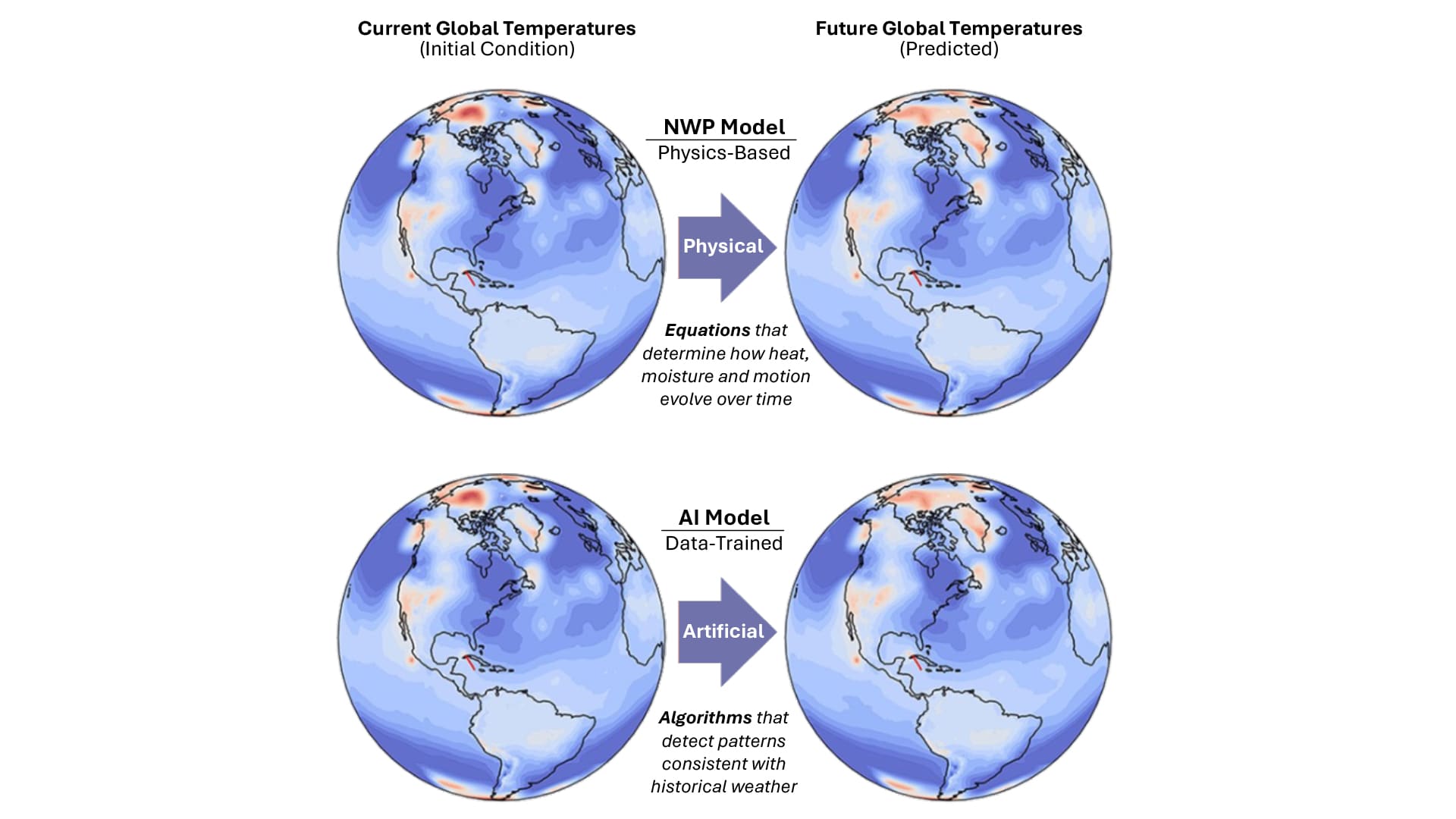

Figure 3 shows that both NWP and AI can generate realistic and reasonably consistent future weather scenarios. However, their approaches are very different. NWP does the job using scientific equations that take the current weather and predict how it will change over time. As a result, NWP forecasts provide an estimate of future weather as well as the physical changes in the weather that explain the resulting forecast. The analogy to facial recognition is that a physical model can not only predict facial movements resulting from shock or surprise but can also help explain why the human body reacts in that way. An AI model can make as good a prediction, and perhaps a better one if well trained, but cannot ‘explain’ its predicted outcome. So, NWP provides the what and the why. AI weather models are trained on historical weather patterns, which enable them to generate realistic and accurate weather forecasts (the what), but they lack the physical ‘why’ behind their own predictions.

Figure 3 – Numerical weather prediction (“NWP”) models predict future weather using physics-based equations that implicitly ‘understand’ how earth’s atmosphere works. In contrast, AI-based weather models are trained to detect, analyze and predict the weather based on the patterns contained in large databases of historical weather. (Adapted from NOAA image.)

Combining the Best of Both Worlds: NWP and AI

NWP has distinct advantages tied to the physics embedded within the model, but is computationally expensive, especially in its ensemble mode. AI can generate weather scenarios that lack the physics, but benefit greatly from efficiency. Can we combine the benefits without sacrificing their value?

The main constraint on NWP ensembles is the limit on available computing resources, and in most cases, there is also a time constraint on when the forecast must be released. At the National Hurricane Center (“NHC”) in Miami, Florida, ensemble forecasts for active hurricanes must be updated every three to six hours in order to provide the latest available information to the general public, emergency managers and other stakeholders. If the forecast takes too long, the information, no matter how detailed, becomes useless. Given NHC’s current computing resources, combined with forecast models available from other agencies worldwide such as the UK Met Office, generally about 100 to 150 individual scenarios are available with each six-hour update cycle. AI models can provide a much larger ensemble set in the same runtime using less computing power.

Forecast models can further benefit from the power of AI via forecast accuracy, which in the world of NWP requires increased spatial resolution of the ‘model grid’ (see Figure 2). Where enhanced NWP accuracy comes at an enormous computational expense, AI models can provide the same forecast quality on a finer-scale grid without sacrificing computer time.

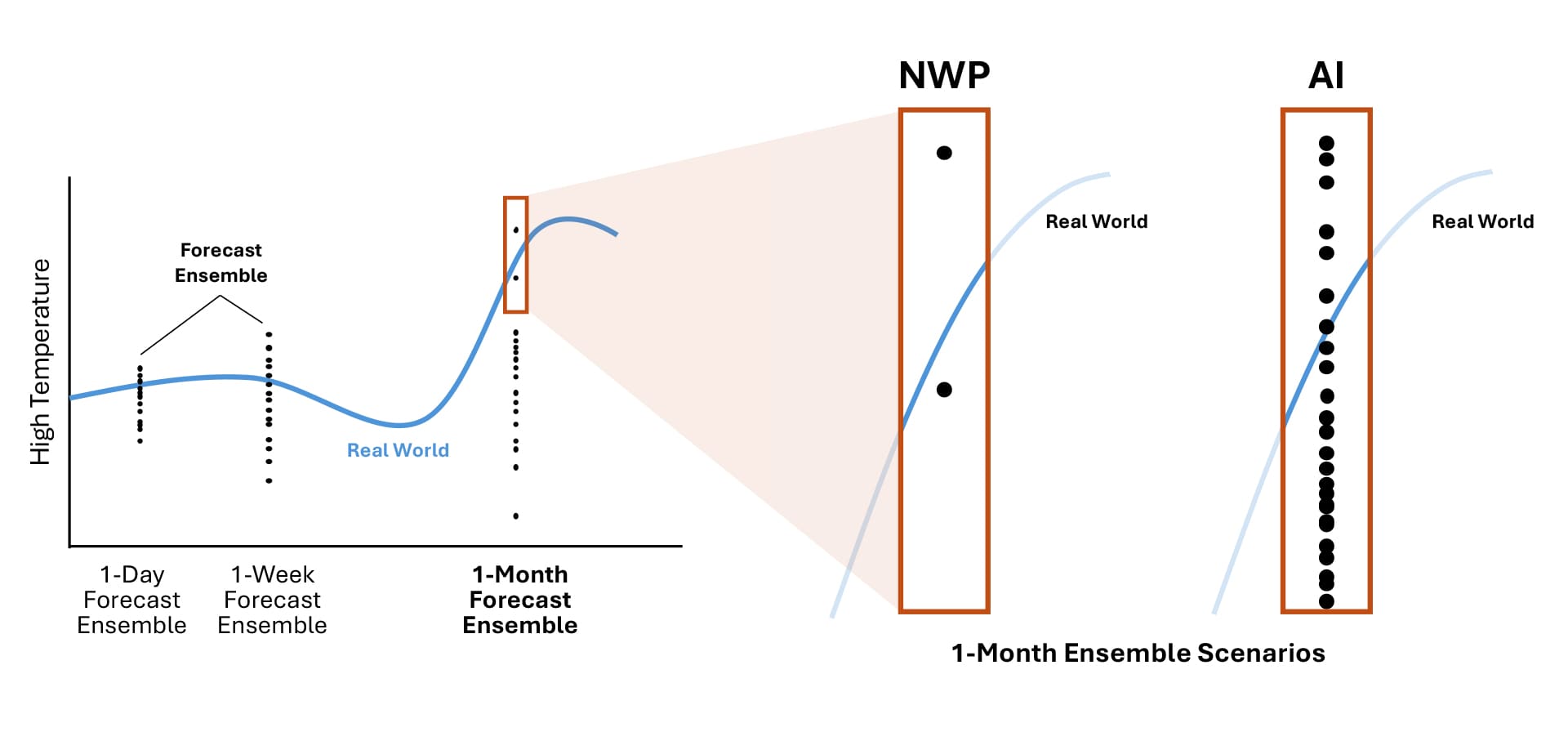

The prospective benefits of coupling NWP and AI are shown in Figure 4. NWP models use physics-based equations to generate weather forecasts. They require little more than the initial weather conditions at time = 0. Modern NWP adds value by producing forecast ensembles containing many individual future scenarios, but ensembles require drastically more resource to accomplish the task. In the case of hurricane forecasts, this means having 100-150 opinions about how the hurricane might develop and where it might strike.

Using the analogy of a simple temperature forecast for New York City, an NWP ensemble forecast may cluster closely around an accurate projection in the near-term, as shown in Figure 4. As the forecast grows longer, and uncertainty in the forecast increases, the spread in the ensemble broadens. In extended range forecasts, the spread becomes so wide that even a 150-member ensemble may not provide detail at the tail end of the ensemble distribution. This is important because the real world (observed weather) doesn’t always track through the middle of the forecast cluster. As we know, sometimes observed weather is extreme, making it important to have more reliable forecast scenarios that can fill the gaps in the forecast spread.
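The thin coverage at the tail can be made concrete with a little arithmetic. The sketch below assumes, as a simplification, that ensemble members behave like independent and equally likely draws from the forecast distribution, which real ensembles only approximate; the function names are hypothetical.

```python
def expected_tail_members(p_event, n_members):
    """Expected number of members that land in a tail of probability p_event."""
    return p_event * n_members

def prob_tail_sampled(p_event, n_members):
    """Probability that at least one member lands in that tail."""
    return 1.0 - (1.0 - p_event) ** n_members

p_extreme = 0.01  # a 1-in-100 outcome
for n in (150, 15000):
    print(
        n,
        round(expected_tail_members(p_extreme, n), 1),
        round(prob_tail_sampled(p_extreme, n), 3),
    )
```

Under these assumptions, a 150-member ensemble is expected to place only one or two members beyond the 1-in-100 threshold, and has a meaningful chance of placing none at all, whereas an ensemble 100 times larger would populate that tail with on the order of 150 scenarios.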

Figure 4 – Traditional numerical weather prediction models predict future weather through the use of physics-based predictive equations. They receive a starting or ‘initial’ condition and bounding conditions at the edges of the domain, but the future state of the model is based entirely on the atmospheric physics and fluid dynamics.

Here is where AI steps in to enhance an existing technology. Trained using huge volumes of historical weather and initialized with current weather observations, AI can develop an ‘ensemble of ensembles’, or a set of realistic scenarios spawned from a single NWP ensemble member.
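The bookkeeping behind an ‘ensemble of ensembles’ can be sketched as follows. This shows structure only: the function `spawn_scenarios` is a hypothetical stand-in that adds random noise to a single number, whereas a real AI weather model would evolve a full atmospheric state learned from historical data. All values and names are assumptions for illustration.

```python
import random

def spawn_scenarios(member_value, n_children, rng, spread=1.0):
    """Placeholder for an AI model: return n_children variations of one NWP member."""
    return [member_value + rng.gauss(0.0, spread) for _ in range(n_children)]

def ensemble_of_ensembles(nwp_members, n_children, seed=0):
    """Spawn a set of scenarios from every member of an NWP ensemble."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    return [spawn_scenarios(m, n_children, rng) for m in nwp_members]

# Hypothetical NWP ensemble: five temperature forecasts (degrees)
nwp_members = [72.0, 74.0, 75.0, 76.0, 78.0]
expanded = ensemble_of_ensembles(nwp_members, n_children=100)

total = sum(len(children) for children in expanded)
print(len(nwp_members), "NWP members ->", total, "scenarios")
```

The design point is that each expensive NWP member anchors a whole family of inexpensive AI scenarios, so the total scenario count grows multiplicatively while the number of physics-based runs stays fixed.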

Figure 5 shows that by enhancing an NWP ensemble in this way, one can generate much more detail, in particular where an NWP ensemble on its own falls short. This approach has the advantage of benefitting each modeling strategy with what it lacks individually. NWP models benefit from the physics and thermodynamics embedded in their foundation, but require a super-computing resource to generate ensembles within a reasonable time. AI models lack a true physical connection between the starting point and the resulting forecast but are computationally highly efficient. The two combined strike a more optimal balance between realism and efficiency, while providing decision-makers with a much more detailed and insightful forecast.

Figure 5 – AI-based weather models make predictions via AI-based pattern recognition equations trained with observations and reconstructed historical environments. These models evolve the initial condition through learning how atmospheric patterns have evolved historically, and can capture the evolution of the atmosphere without knowledge of physics or thermodynamics.

Why It’s Important to Aeolus

Aeolus Research has commenced working with GraphCast and other AI weather models to better anticipate the risks associated with extreme weather. Just as traditional NWP forecasts can only provide limited information about the ‘tail of the distribution’, catastrophe models provide a limited view of how strong and how often extreme events will occur in the future. Catastrophe modeling firms apply a combination of physical and statistical techniques to generate a large ensemble of potential future events. Using AI, and the added detail it provides, can aid in decision-making around risk selection, pricing, and an array of underwriting and operational risk factors.

In collaboration with our research partners at the State University of New York at Albany (SUNY Albany), early in 2025 we kicked off a study of severe convective storms (“SCS”) to better understand the risks associated with tornadoes, hailstorms and severe thunderstorms. The project will examine SCS risk using a coupled NWP-AI model similar to the one described above. While our initial application will be to SCS risk, GraphCast supports a wide range of weather risk applications including hurricane track prediction, the simulation of atmospheric rivers and associated winter storms, extreme temperatures, and the list goes on.

GraphCast takes as input the two most recent states of observed weather – at the current time and six hours prior – and predicts the next weather state six hours later. A single weather state is represented on a 0.25-degree latitude-longitude grid (721×1440 grid points cover the Earth), corresponding to a grid spacing of roughly 17 miles (about 28 km) at the equator. Each grid point carries a set of atmospheric variables such as temperature, moisture and winds.
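The stepping scheme described above, two input states producing the next state, repeated to reach longer lead times, can be sketched as a simple loop. This is not GraphCast’s code or API: `rollout` and `toy_step` are hypothetical names, and the placeholder step merely extrapolates a single number where the real model applies a trained neural network to a full global grid.

```python
STEP_HOURS = 6  # each model step advances the forecast by six hours

def rollout(state_prev, state_curr, step_fn, n_steps):
    """Repeatedly apply a two-state step function, feeding each output back in."""
    states = []
    for _ in range(n_steps):
        state_next = step_fn(state_prev, state_curr)
        states.append(state_next)
        # Slide the two-state window forward by one step
        state_prev, state_curr = state_curr, state_next
    return states

def toy_step(prev, curr):
    """Placeholder for a trained model: linear extrapolation of the last two states."""
    return [2 * c - p for p, c in zip(prev, curr)]

n_steps = 10 * 24 // STEP_HOURS  # a 10-day forecast takes 40 six-hour steps
forecast = rollout([70.0], [71.0], toy_step, n_steps)
print(n_steps, forecast[0], forecast[-1])
```

Because each step consumes the previous step’s output, any error made early in the rollout is carried forward, which is one reason longer-range forecasts are harder.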

Here, one of the AI model limitations is revealed – we can work with six-hourly time slices in the forecast output but cannot trace physically how we got from t=0 to t+6 hours since GraphCast is only trained to jump ahead in six-hour increments. In contrast, the NWP model runs on a time step of seconds to minutes, allowing one to retrace the six-hourly forecast to its roots six hours earlier. The same applies, to a much greater degree, when we seek to understand how one-day or one-week forecasts diverge. But when initialized with an NWP ensemble member, the AI model output is informed by NWP physics. This becomes critical when researchers aim to improve a weather model, validate its output and scrutinize its best and worst forecasts.

But while traditional NWP scales with compute time, capitalizing on the vast amount of historical weather data to improve accuracy is not always straightforward. Rather, NWP methods are improved by highly experienced experts innovating better physical relationships, assumptions and approximations, which can be a time-consuming and costly process.

AI weather models, also known as machine learning–based weather prediction (“MLWP”), are trained on historical data and offer an alternative to traditional NWP. MLWP has the potential to dramatically improve forecast accuracy by capturing patterns in the data that are not represented by the model physics. Such models also offer an opportunity to gain efficiency by exploiting modern AI-optimized hardware and to strike a more favorable speed–accuracy balance.

In the next edition of this special series on AI, we’ll explore more deeply how GraphCast can be used in a specific risk assessment application. Coupled in an NWP-AI framework, GraphCast can simulate environmental conditions that are primed to generate severe thunderstorms in the U.S. In doing so, one can envision a tool that supplements existing catastrophe models with a larger, more robust catalog of future thunderstorm events, some of which contain catastrophic outbreaks of hailstorms and tornadoes. Given the recent uptick in severe convective storms, and the notion that climate change may be modulating the risk in ways that are increasingly difficult to detect using conventional NWP, this AI application is bound to gain attention.

DISCLAIMER

THE INFORMATION CONTAINED IN THIS REPORT (THIS “REPORT”) IS BEING FURNISHED BY AEOLUS CAPITAL MANAGEMENT LTD. (“AEOLUS”) FOR INFORMATIONAL PURPOSES ONLY AND MAY NOT BE USED FOR ANY OTHER PURPOSE. THIS REPORT CONTAINS AEOLUS AND ITS AFFILIATES’ VIEWS ON VARIOUS UNCERTAIN SCIENTIFIC CONCEPTS AND CONTENT. RECIPIENTS AND THEIR ADVISORS SHOULD PERFORM THEIR OWN INDEPENDENT REVIEW WITH RESPECT TO SUCH MATTERS AND REACH THEIR OWN INDEPENDENT CONCLUSIONS.

WHILE EFFORT AND CARE HAS BEEN TAKEN IN PREPARING THE CONTENT OF THIS REPORT, AEOLUS AND THE AUTHORS DISCLAIM ALL WARRANTIES, EXPRESSED OR IMPLIED, AS TO THE ACCURACY OF THE INFORMATION SET OUT HEREIN. FURTHER, NEITHER AEOLUS NOR THE AUTHORS SHALL BE LIABLE FOR ANY LOSSES OR DAMAGES ARISING FROM THE USE OF, OR RELIANCE ON THE INFORMATION OR THE REFERENCES SET OUT HEREIN.

PORTIONS OF THIS REPORT HAVE BEEN OBTAINED FROM THIRD-PARTY SOURCES. WHILE SUCH INFORMATION IS BELIEVED TO BE RELIABLE BY AEOLUS AND THE AUTHORS, NO EXPRESS OR IMPLIED WARRANTY, REPRESENTATION OR GUARANTEE IS MADE AS TO THE CORRECTNESS, COMPLETENESS OR SUFFICIENCY OF SUCH THIRD-PARTY INFORMATION. WHERE A THIRD-PARTY RESOURCE HAS BEEN REFERENCED, AEOLUS SEEKS TO ACCURATELY CITE SUCH RESOURCE. NO CONTENT WITHIN THIS REPORT IS KNOWINGLY AN INFRINGEMENT OF COPYRIGHT. ANY POTENTIAL INFRINGEMENT CAN BE IMMEDIATELY ADDRESSED AND WHERE APPROPRIATE RECTIFIED, ON NOTIFICATION TO AEOLUS.

THIS REPORT CONTAINS THE CURRENT OPINIONS OF AEOLUS, WHICH ARE SUBJECT TO CHANGE WITHOUT NOTICE. AEOLUS TAKES NO RESPONSIBILITY FOR UPDATING THE INFORMATION CONTAINED HEREIN AS FACTS OR AEOLUS’ VIEWS DEVELOP IN THE FUTURE. STATEMENTS IN THIS REPORT ARE MADE AS OF THE DATE OF THE INITIAL COMMUNICATION AND THERE SHALL BE NO IMPLICATION THAT THE INFORMATION HEREIN IS CORRECT AS OF ANY TIME SUBSEQUENT TO SUCH DATE.